Exploring LLM Reasoning: Understanding Reasoning in Large Language Models

In recent years, large language models have evolved rapidly. This article examines LLM reasoning: how these models are moving from predictive text generation toward solving complex, multi-step problems, the mechanics and training paradigms behind that shift, and what it means in practice for businesses and developers. The goal is to connect academic AI research with the practical application of reasoning in large language models, offering insights for researchers, engineers, and business leaders.

The Shift in AI: From Predictive Text to Active Reasoning

The landscape of AI is shifting from pattern recognition toward models that carry out explicit, multi-step reasoning. Early language models struggled with seemingly simple tasks, which exposed their limits in applying logical inference. This section contrasts the traditional “predictive text” approach with the emerging paradigm of “active reasoning,” exemplified by models such as OpenAI o1 and DeepSeek-R1. These models are markedly better at working through problems step by step instead of reproducing patterns memorized from their training data, although how far this amounts to genuine reasoning is still debated. The result is a new generation of models that act less like repositories of information and more like problem-solvers.

The Strawberry Problem and Its Implications

The “strawberry problem” illustrates the challenges early language models faced. Asked how many times the letter ‘r’ appears in “strawberry,” these models often answered incorrectly (the correct answer is three). Part of the difficulty is that models process text as subword tokens rather than individual letters, but the failure also exposed a broader weakness: the models answered from surface patterns instead of working through the problem step by step. That is the gap between pattern recognition and reasoning. With chain-of-thought prompting and models such as OpenAI o1 and DeepSeek-R1, LLM performance on such tasks has improved markedly, a significant step in the evolution of AI.

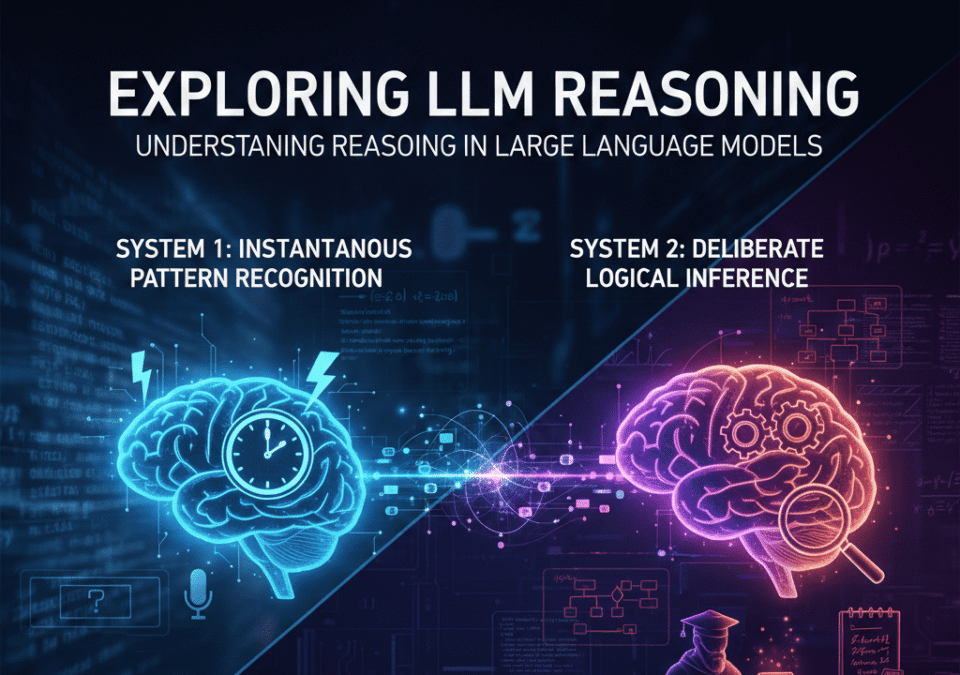
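Spelling the word out and checking one letter at a time, as a chain-of-thought response would, turns the task into a trivial procedure. The following Python sketch uses a hypothetical helper, `count_letter_stepwise`, to record each step of that count; it illustrates explicit step-by-step counting and is not a description of how any model works internally.

```python
from typing import List, Tuple


def count_letter_stepwise(word: str, letter: str) -> Tuple[int, List[str]]:
    """Count `letter` in `word` one character at a time, recording every step."""
    steps: List[str] = []
    count = 0
    for position, char in enumerate(word, start=1):
        if char == letter:
            count += 1
            steps.append(f"{position}: '{char}' matches, count = {count}")
        else:
            steps.append(f"{position}: '{char}' does not match")
    return count, steps


total, trace = count_letter_stepwise("strawberry", "r")
print("\n".join(trace))
print("Total:", total)  # Total: 3
```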

Defining Reasoning: Pattern Recognition vs. Logical Inference

To grasp the significance of advances in LLM reasoning, it helps to distinguish pattern recognition from logical inference. Pattern recognition, often associated with “System 1” thinking, quickly identifies familiar patterns and associations based on past experience. Logical inference corresponds to “System 2” thinking: deliberate, multi-step analysis that derives conclusions. The distinction matters because logical inference is what lets AI solve problems that go beyond memorization and recall. LLMs like GPT-4 show promise in moving toward more robust logical inference, yet MIT researchers have found that the reasoning abilities of LLMs are often overestimated when the models are tested on counterfactual variants of familiar tasks.

The Role of LLMs in Modern AI

Over the past few years, large language models have become central to modern AI. Trained on vast amounts of data, they perform well across a wide range of natural language tasks. Their ability to understand and generate human-like text has enabled applications ranging from chatbots and virtual assistants to content creation and language translation. At sufficient scale, LLMs have also shown reasoning abilities well beyond those of earlier, smaller models. As they are integrated into more industries and research domains, they are changing how people interact with technology and apply AI.

How LLM Reasoning Works

Chain-of-Thought (CoT) and Its Impact on Performance

Chain-of-Thought (CoT) prompting is a pivotal technique for enhancing the reasoning capabilities of LLMs. By encouraging the model to “think out loud,” CoT decomposes a complex task into a series of intermediate reasoning steps. This contrasts sharply with single-stage prompting strategies, where the model attempts to give an answer directly without spelling out its thought process. Guiding the LLM through a structured, multi-step reasoning process in this way improves accuracy on complex problems, and it significantly boosts performance on tasks that require careful deliberation, especially with larger models.
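A minimal Python sketch of the difference between the two prompting styles, under stated assumptions: the few-shot demonstration, the `Q:`/`A:` layout, and the “The answer is N.” convention are all hypothetical choices, and no model is called. With `chain_of_thought=True` the demonstration shows its intermediate steps; otherwise it shows only the final answer. A small helper then pulls the final answer out of a completion.

```python
import re
from typing import List, Optional, Tuple

# Hypothetical few-shot demonstration: (question, worked reasoning, final answer).
DEMOS: List[Tuple[str, str, str]] = [
    (
        "A shop has 3 boxes with 4 apples each. It sells 5 apples. How many are left?",
        "There are 3 * 4 = 12 apples. After selling 5, 12 - 5 = 7 remain.",
        "7",
    ),
]


def build_prompt(question: str, chain_of_thought: bool = True) -> str:
    """Build a few-shot prompt; with CoT, demonstrations include intermediate steps."""
    blocks = []
    for demo_question, reasoning, answer in DEMOS:
        if chain_of_thought:
            demo_answer = f"{reasoning} The answer is {answer}."
        else:
            demo_answer = f"The answer is {answer}."
        blocks.append(f"Q: {demo_question}\nA: {demo_answer}")
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the number after the assumed 'The answer is' phrase, if present."""
    match = re.search(r"The answer is\s*(-?\d+(?:\.\d+)?)", completion)
    return match.group(1) if match else None


print(build_prompt("Tom has 2 bags with 6 marbles each and gives away 4. How many are left?"))
# A hypothetical completion that follows the demonstrated format:
completion = "He has 2 * 6 = 12 marbles. After giving away 4, 12 - 4 = 8 remain. The answer is 8."
print(extract_final_answer(completion))  # 8
```

Keeping a fixed answer phrase in the demonstrations makes the final answer easy to extract and grade, regardless of how long the reasoning before it is.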

Tree-of-Thought (ToT): Exploring Multiple Solutions

While Chain-of-Thought follows a single, linear reasoning path, Tree-of-Thought (ToT) lets an LLM explore several candidate reasoning paths. At each step the model proposes alternative intermediate “thoughts,” evaluates how promising each one is, and continues from the best candidates, abandoning dead ends instead of committing to its first idea. This branching process makes ToT particularly effective for problems that require search, planning, or lookahead, where several approaches look plausible and early choices are hard to undo.
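The branching process can be sketched as a breadth-first beam search over partial solutions. This is a minimal illustration under stated assumptions: in a real ToT system an LLM would propose and score the thoughts, whereas here the hypothetical functions `propose`, `evaluate`, and `is_goal` solve a toy puzzle (reach 10 from 1 using “+3” and “*2”) so the search runs without any model.

```python
from typing import Callable, List, Optional, Tuple

# A "thought" state here is (current value, operations applied so far).
State = Tuple[int, Tuple[str, ...]]


def tree_of_thought_search(
    initial: State,
    propose: Callable[[State], List[State]],
    evaluate: Callable[[State], float],
    is_goal: Callable[[State], bool],
    beam_width: int = 2,
    max_depth: int = 5,
) -> Optional[State]:
    """Breadth-first beam search: expand, check for a solution, keep the best branches."""
    frontier = [initial]
    for _ in range(max_depth):
        # Expand every state on the frontier into candidate next thoughts.
        candidates = [child for state in frontier for child in propose(state)]
        for candidate in candidates:
            if is_goal(candidate):
                return candidate
        # Keep only the most promising branches; the rest are pruned.
        candidates.sort(key=evaluate, reverse=True)
        frontier = candidates[:beam_width]
        if not frontier:
            return None
    return None


# Toy stand-ins for LLM calls.
TARGET = 10


def propose(state: State) -> List[State]:
    value, ops = state
    return [(value + 3, ops + ("+3",)), (value * 2, ops + ("*2",))]


def evaluate(state: State) -> float:
    return -abs(TARGET - state[0])  # closer to the target scores higher


def is_goal(state: State) -> bool:
    return state[0] == TARGET


print(tree_of_thought_search((1, ()), propose, evaluate, is_goal, beam_width=2))
# (10, ('+3', '+3', '+3'))
print(tree_of_thought_search((1, ()), propose, evaluate, is_goal, beam_width=1))
# None
```

With `beam_width=2` the search finds 1 → 4 → 7 → 10. With `beam_width=1`, which keeps a single greedy path much like one linear chain of thought, it commits early to the branch through 8 and never reaches the target within five steps.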

Self-Correction Mechanisms in LLMs

Self-correction mechanisms are an active area of LLM reasoning research. The aim is for the model to critically evaluate its own reasoning, identify errors or inconsistencies, and iteratively refine its response through feedback loops and self-assessment. Results so far are mixed: research such as the paper “Large Language Models Cannot Self-Correct Reasoning Yet” found that, without external feedback, models often fail to improve their answers and sometimes make them worse. Self-correction tends to work better when the model receives an external signal, such as a failing unit test or a tool’s output. Researchers at OpenAI and other institutions are working on the problem, because reliable self-correction is crucial for making reasoning in large language models robust and trustworthy enough for complex real-world challenges.
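A feedback loop of this kind can be sketched as generate, verify, revise, with an external checker rather than the model itself deciding whether an answer is acceptable. The names `refine_with_feedback`, `fake_model`, and `count_checker` are hypothetical: `fake_model` stands in for an LLM call, and the checker plays the role of an external tool (exact letter counting for the strawberry problem).

```python
from typing import Callable, List, Optional, Tuple


def refine_with_feedback(
    generate: Callable[[Optional[str]], int],
    verify: Callable[[int], Tuple[bool, Optional[str]]],
    max_rounds: int = 3,
) -> Tuple[Optional[int], List[Tuple[int, bool]]]:
    """Ask for an answer, check it externally, and feed any error message back."""
    feedback: Optional[str] = None
    history: List[Tuple[int, bool]] = []
    for _ in range(max_rounds):
        answer = generate(feedback)
        ok, feedback = verify(answer)
        history.append((answer, ok))
        if ok:
            return answer, history
    return None, history  # no verified answer within the round budget


def fake_model(feedback: Optional[str]) -> int:
    # Hypothetical model: answers 2 at first, then revises to 3 once it gets feedback.
    return 2 if feedback is None else 3


def count_checker(answer: int) -> Tuple[bool, Optional[str]]:
    # External tool: counts characters exactly instead of trusting the model.
    expected = "strawberry".count("r")
    if answer == expected:
        return True, None
    return False, f"{answer} is wrong; spell the word out letter by letter and recount."


result, history = refine_with_feedback(fake_model, count_checker)
print(result, history)  # 3 [(2, False), (3, True)]
```

The loop stops as soon as the checker accepts an answer and gives up after `max_rounds`, so a model that never improves cannot loop forever.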

Training Paradigms: Why LLM Reasoning Works

The Importance of Reinforcement Learning in AI

Reinforcement learning plays a crucial role in cultivating advanced reasoning in AI systems, particularly large language models. Unlike supervised learning, where models learn from labeled examples, reinforcement learning trains an agent to make decisions within an environment so as to maximize a reward signal. For LLM reasoning, the reward can be based on the final result (an outcome reward, such as whether a math answer matches the reference) or on the quality of the intermediate steps (a process reward). Either way, the model is pushed to develop reasoning strategies that reliably reach correct answers, not just answers that sound plausible. Approaches such as training language models to self-correct via reinforcement learning are likely to become increasingly important as LLMs improve.
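A minimal sketch of an outcome-based reward in Python, assuming completions end with a hypothetical `Answer: <number>` line. It also shows one common way to turn rewards into a learning signal: normalizing each reward against the other samples drawn for the same prompt, the group-relative idea behind GRPO (Group Relative Policy Optimization), which DeepSeek used to train DeepSeek-R1. No model is trained here, and the completions are made up.

```python
import re
import statistics
from typing import List, Optional


def extract_answer(completion: str) -> Optional[str]:
    """Find the number after the assumed 'Answer:' marker."""
    match = re.search(r"Answer:\s*(-?\d+(?:\.\d+)?)", completion)
    return match.group(1) if match else None


def outcome_reward(completion: str, reference: str) -> float:
    """1.0 if the final answer matches the reference, else 0.0; steps are not scored."""
    return 1.0 if extract_answer(completion) == reference else 0.0


def group_advantages(rewards: List[float]) -> List[float]:
    """Normalize rewards by the group's mean and standard deviation."""
    mean = statistics.mean(rewards)
    std = statistics.pstdev(rewards)
    if std == 0:
        return [0.0 for _ in rewards]  # all samples equally good: no signal
    return [(r - mean) / std for r in rewards]


# Hypothetical sampled completions for "What is (3 + 4) * 2?"
samples = [
    "3 + 4 = 7, 7 * 2 = 14. Answer: 14",
    "3 + 4 = 7, 7 + 2 = 9. Answer: 9",
    "I think it's fourteen.",
    "(3 + 4) * 2 = 14. Answer: 14",
]
rewards = [outcome_reward(s, "14") for s in samples]
print(rewards)                    # [1.0, 0.0, 0.0, 1.0]
print(group_advantages(rewards))  # [1.0, -1.0, -1.0, 1.0]
```

Correct samples receive positive advantages and incorrect ones negative advantages, so a policy-gradient update would make the successful reasoning paths more likely. A process reward would instead score each intermediate step, which requires a separate step-level grader.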

Inference-Time Compute: Allowing LLMs to Think Longer

Inference-time compute is the amount of computation a language model spends while generating a response. Giving a model more room to “think” at inference time, for example by letting it generate a longer chain of reasoning or by sampling several attempts, often improves its performance on complex problems. This matters most for multi-step tasks, where the model must evaluate several options before reaching a conclusion. It is one reason reasoning models such as DeepSeek-R1, which produce long reasoning chains before answering, tend to perform much better on reasoning tasks than models that answer directly.
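One simple way to spend extra inference-time compute is self-consistency: sample several independent reasoning chains and keep the most common final answer. The Python sketch below shows only the voting step; the sampled answers are hypothetical, and answer extraction is assumed to have happened already. Reasoning models such as DeepSeek-R1 spend their extra compute mainly on longer individual chains, so this is a complementary technique rather than a description of how those models work.

```python
from collections import Counter
from typing import List, Optional


def self_consistency_vote(sampled_answers: List[Optional[str]]) -> Optional[str]:
    """Return the most common final answer across sampled chains (None if none parsed)."""
    valid = [a for a in sampled_answers if a is not None]
    if not valid:
        return None
    return Counter(valid).most_common(1)[0][0]


# Hypothetical final answers extracted from five sampled reasoning chains;
# None marks a chain whose final answer could not be parsed.
samples = ["14", "9", "14", None, "14"]
print(self_consistency_vote(samples))  # 14
```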

Understanding Inference Scaling Laws

Inference scaling laws describe the empirical relationship between the compute allocated during inference and the resulting gains on reasoning tasks. They suggest that increasing inference-time compute generally improves the reasoning performance of LLMs. The relationship is not linear, however, and returns typically diminish as compute grows. Inference has become a new frontier for language models, one where further capabilities may still be uncovered. Understanding and optimizing these scaling behaviors is crucial for getting the most out of reasoning in large language models and for achieving state-of-the-art results on challenging benchmarks.
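A back-of-the-envelope model shows why returns diminish. Assume, purely for illustration, that each sampled answer is independently correct with probability p and that a strict majority vote over an odd number n of samples picks the final answer; accuracy is then the probability that more than half the samples are correct. Real samples from one model are correlated, so actual gains are usually smaller than this toy model predicts.

```python
from math import comb


def majority_vote_accuracy(p: float, n: int) -> float:
    """P(a strict majority of n independent samples is correct), per-sample accuracy p."""
    if n % 2 == 0:
        raise ValueError("use an odd n to avoid ties")
    # Sum the binomial probabilities of k correct samples for every k > n / 2.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


for n in (1, 3, 5, 7):
    print(n, round(majority_vote_accuracy(0.6, n), 4))
```

With p = 0.6 this prints roughly 0.6, 0.648, 0.683, and 0.710: each extra pair of samples helps less than the previous one. If p falls below 0.5, voting makes accuracy worse, a reminder that extra inference compute cannot rescue a model that is usually wrong.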

Benchmarks & Evaluation in LLM Reasoning

Key Benchmarks: GSM8K, MATH, and HumanEval

When evaluating LLM reasoning, specific benchmarks serve as critical measuring sticks. GSM8K, MATH, and HumanEval are among the most widely used for assessing reasoning skills, and they reveal the strengths and weaknesses of different language models in ways that help guide further development.

| Benchmark | Description |
|---|---|
| GSM8K | Grade-school math word problems that require multi-step arithmetic, probing the mathematical reasoning abilities of language models. |
| MATH | Competition-level mathematics problems that demand deeper reasoning than GSM8K. |
| HumanEval | Programming problems in which the model writes Python functions from natural language descriptions; the generated code is checked for functional correctness against unit tests. |
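HumanEval results are usually reported as pass@k, the probability that at least one of k generated solutions passes the unit tests. The estimator published with HumanEval draws n >= k samples per problem, counts the c that pass, and computes 1 - C(n - c, k) / C(n, k). A minimal Python version follows; the sample counts in the usage lines are hypothetical.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate from n samples, c of which pass all unit tests."""
    if n - c < k:
        return 1.0  # every size-k subset must contain at least one passing sample
    # 1 minus the probability that a random size-k subset contains only failures.
    return 1.0 - comb(n - c, k) / comb(n, k)


print(pass_at_k(n=10, c=3, k=1))  # ~0.3
print(pass_at_k(n=10, c=3, k=5))  # ~0.9167
```

Python's exact integer `comb` keeps this simple form accurate for small n; the per-problem estimates are then averaged over the benchmark.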

The Decline of MMLU: What’s Replacing It?

MMLU (Massive Multitask Language Understanding) has been a popular benchmark for evaluating language models, but its relevance for assessing reasoning specifically is diminishing. MMLU covers a broad range of knowledge domains, yet its multiple-choice questions mostly test knowledge rather than deeply probing reasoning, and frontier models now score close to its ceiling. Researchers are therefore turning to benchmarks that target reasoning skills more directly, such as GSM8K, MATH, and HumanEval. These give a more granular view of reasoning abilities and of the specific strengths and weaknesses of different large language models, better reflecting the current focus on advancing reasoning rather than relying solely on generalized benchmarks.

Evaluating Reasoning Capabilities of LLMs

Evaluating the reasoning abilities of LLMs is a complex task that requires careful consideration of various factors. Researchers at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) have found that large language models “often struggle to handle more complex problems that require true understanding,” suggesting their reasoning abilities are often overestimated. Standard benchmark datasets may not accurately reflect real-world scenarios, and models can sometimes “game” the system by memorizing patterns rather than truly reasoning. To address these challenges, researchers are developing new evaluation methodologies that focus on counterfactual reasoning, logical consistency, and the ability to generalize to unseen reasoning tasks. These methods aim to provide a more comprehensive and nuanced assessment of the true reasoning capabilities of LLMs. Proper evaluation of reasoning in language models is crucial for advancing AI.
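A counterfactual test keeps a task’s structure but changes an assumption the model has probably memorized. The Python sketch below uses hypothetical helper names to generate paired addition items in the familiar base 10 and in base 9, then scores answers by exact match. A model that recites base-10 patterns would answer “98” to the base-9 item, whose correct answer is “108”.

```python
from typing import List, Tuple

DIGITS = "0123456789abcdef"


def to_base(n: int, base: int) -> str:
    """Render a non-negative integer in a base between 2 and 16."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        n, remainder = divmod(n, base)
        digits.append(DIGITS[remainder])
    return "".join(reversed(digits))


def addition_item(a: int, b: int, base: int) -> Tuple[str, str]:
    """Return (prompt, expected answer) with operands and answer written in `base`."""
    prompt = f"In base-{base}, what is {to_base(a, base)} + {to_base(b, base)}?"
    return prompt, to_base(a + b, base)


def exact_match_accuracy(items: List[Tuple[str, str]], answers: List[str]) -> float:
    """Fraction of model answers that exactly match the expected answers."""
    hits = sum(answer.strip() == expected for (_, expected), answer in zip(items, answers))
    return hits / len(items)


default_item = addition_item(27, 62, base=10)        # ('In base-10, what is 27 + 62?', '89')
counterfactual_item = addition_item(27, 62, base=9)  # ('In base-9, what is 30 + 68?', '108')

# Hypothetical answers from a model that always computes in base 10:
print(exact_match_accuracy([default_item], ["89"]))         # 1.0
print(exact_match_accuracy([counterfactual_item], ["98"]))  # 0.0
```

Comparing accuracy on default and counterfactual versions of the same items separates arithmetic skill from recall of base-10 patterns; a large drop on the counterfactual set suggests the model is reciting rather than reasoning.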

Practical Business & Development Implications

Use Cases: Debugging, Legal Synthesis, and Research

Advances in LLM reasoning have significant implications for business and development. One promising use case is complex debugging, where LLMs analyze code to identify potential errors or vulnerabilities. In the legal field, LLMs can assist with legal document synthesis, drafting contracts or briefs from specific requirements for lawyers to review. In scientific research, LLMs are being used to analyze data, formulate hypotheses, and even help design experiments. These are just a few examples of how LLM reasoning is transforming industries and creating new opportunities for innovation, and more transformative applications are likely as reasoning capabilities continue to improve.

The Cost Factor: Balancing Speed and Accuracy

While LLM reasoning offers significant advantages in accuracy and problem-solving, cost must be weighed too. Reasoning models are often slower and more computationally expensive than “flash” models optimized for speed, partly because they generate many reasoning tokens before producing an answer. This trade-off between speed and accuracy must be evaluated carefully when deploying LLMs in real-world applications. For tasks where speed is critical, such as real-time customer service, a faster but less accurate model may be preferable. For tasks that demand high precision, such as legal document review or scientific research, the additional cost of a reasoning model may be justified. As future models become more efficient, this trade-off may become less pronounced.
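The trade-off can be made explicit with a simple routing rule. The Python sketch below uses entirely hypothetical model names, prices, token counts, and latencies, and it ignores input-token costs for brevity; substitute measured values for the models you actually deploy.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    name: str
    usd_per_million_output_tokens: float  # hypothetical price
    avg_output_tokens: int                # includes any reasoning tokens generated
    avg_latency_s: float


def cost_per_request(profile: ModelProfile) -> float:
    """Approximate output-token cost of one request (input tokens ignored)."""
    return profile.avg_output_tokens * profile.usd_per_million_output_tokens / 1_000_000


def choose_model(needs_high_precision: bool, latency_budget_s: float,
                 fast: ModelProfile, reasoning: ModelProfile) -> ModelProfile:
    # Pay for the reasoning model only when precision matters and latency allows it.
    if needs_high_precision and reasoning.avg_latency_s <= latency_budget_s:
        return reasoning
    return fast


# Hypothetical profiles, not real models or prices.
FAST = ModelProfile("fast-model", 0.60, 300, 0.8)
REASONING = ModelProfile("reasoning-model", 10.0, 4000, 20.0)

print(round(cost_per_request(FAST), 5), round(cost_per_request(REASONING), 5))  # 0.00018 0.04
print(choose_model(False, 2.0, FAST, REASONING).name)  # fast-model (real-time chat)
print(choose_model(True, 60.0, FAST, REASONING).name)  # reasoning-model (document review)
```

A rule this simple will not fit every workload, but writing the thresholds down makes the speed, accuracy, and cost decision explicit and testable.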

Future Directions for LLMs in Various Industries

The future of LLMs across industries is promising, with ongoing research pushing the boundaries of what is possible. As AI becomes increasingly ubiquitous in society, it must handle diverse scenarios reliably, whether familiar or not. Researchers are exploring new architectures, training techniques, and evaluation methods to strengthen the reasoning capabilities of LLMs and address their limitations; MIT researchers, for example, are studying how LLMs reason on novel tasks. The aim is more robust, reliable, and trustworthy AI systems that can tackle complex real-world challenges. As language models evolve, they are likely to play an increasingly important role in sectors from healthcare and finance to education and manufacturing, changing both how we approach natural language reasoning and how humans interact with LLMs.

What Are the Fundamental Reasoning Capabilities of Large Language Models?

The reasoning capabilities of large language models (LLMs) are among the most fascinating aspects of modern AI systems. At their core, these abilities encompass processing information, drawing inferences, solving problems, and generating coherent responses that go beyond simple pattern matching. LLM reasoning spans several dimensions, including logical, mathematical, commonsense, and natural language reasoning. The capabilities of LLMs have evolved significantly, particularly since 2023, with models like GPT-4 demonstrating strong proficiency on complex reasoning tasks. These systems can perform multi-step reasoning, decompose complicated problems into manageable components, and maintain consistency across extended logical sequences. However, the way LLMs reason differs fundamentally from human cognition: these models operate through sophisticated statistical associations learned from vast amounts of training data rather than through conscious understanding or explicit symbolic manipulation.

How Does Chain-of-Thought Prompting Enhance Reasoning in Large Language Models?

Chain-of-thought (CoT) prompting has emerged as a breakthrough technique for improving reasoning in large language models. It encourages LLMs to break reasoning problems into intermediate steps, making the reasoning process explicit and transparent. Instead of jumping directly to an answer, the model generates a step-by-step explanation that mirrors human problem-solving. CoT prompting has proven particularly effective for mathematical and logical reasoning tasks, where sequential thinking is essential. Published research, much of it with evaluation code available on GitHub, has shown that chain-of-thought methods can dramatically improve performance on benchmarks designed to test the reasoning capabilities of LLMs, especially for larger models. By structuring prompts to elicit intermediate steps, practitioners can help models tackle complex problems that would otherwise prove too difficult; the approach scaffolds the model’s reasoning by breaking sophisticated tasks into digestible components.

What Are the Primary Reasoning Tasks Used to Evaluate LLMs?

Evaluating the reasoning abilities of LLMs requires a comprehensive suite of tasks that test different cognitive dimensions. The primary categories are mathematical reasoning, which assesses the ability to solve arithmetic, algebra, and more complex calculations; logical reasoning, which evaluates deductive and inductive thinking; and commonsense reasoning, which tests everyday knowledge and practical understanding. Researchers use specialized benchmarks and datasets to measure these capabilities systematically, including datasets designed for multi-step reasoning, where the model must stay coherent across several logical operations. Natural language reasoning tasks assess how well models like GPT-4 understand context, draw inferences from text, and answer questions that require synthesizing information. The AI research community, including institutions like OpenAI, continuously develops new benchmarks to push the boundaries of what we can measure about LLM reasoning.

🧭 Final Thoughts

LLM reasoning sits at the intersection of model architecture, training data, prompting technique, and evaluation methodology. Large language models have shown they can follow complex chains of thought, handle multi-step tasks, and benefit from structured prompts, but they still struggle with consistency, factual grounding, and transparency of their internal reasoning. Practical deployments therefore pair prompting strategies (chain-of-thought, self-consistency, tool use) and careful model selection with rigorous evaluation, monitoring, and human-in-the-loop oversight.

To make the most of LLM reasoning, teams should adopt clear benchmarks, use hybrid approaches that combine symbolic or retrieval-based systems with LLMs, and design prompts that scaffold reasoning while minimizing shortcuts that produce plausible but incorrect outputs. Robust evaluation should include adversarial tests, calibration checks, and domain-specific metrics to catch failure modes early.
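Calibration checks ask whether a model’s stated confidence matches how often it is actually right. Expected calibration error (ECE), a standard metric, groups answers into equal-width confidence bins and averages the gap between mean confidence and accuracy in each bin, weighted by bin size. A minimal Python sketch follows; the confidences and outcomes are hypothetical, and how confidences are obtained from an LLM (token probabilities or self-reported scores) is left open.

```python
from typing import List


def expected_calibration_error(confidences: List[float], correct: List[bool],
                               n_bins: int = 10) -> float:
    """Weighted average |accuracy - mean confidence| across equal-width bins."""
    bins: List[List[int]] = [[] for _ in range(n_bins)]
    for i, conf in enumerate(confidences):
        index = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the top bin
        bins[index].append(i)
    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(confidences[i] for i in members) / len(members)
        accuracy = sum(correct[i] for i in members) / len(members)
        ece += len(members) / total * abs(accuracy - avg_conf)
    return ece


# Hypothetical confidences and graded outcomes for four answers.
print(round(expected_calibration_error([0.95, 0.95, 0.65, 0.65],
                                       [True, True, True, False]), 3))  # 0.1
```

Here the 0.95 bin is slightly underconfident (all correct) and the 0.65 bin overconfident (half correct); both gaps contribute to the score, and a perfectly calibrated model would score 0.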

Looking ahead, improvements in model interpretability, better training objectives for reasoning, tighter integration with external knowledge and tools, and standardized evaluation frameworks will drive more reliable and useful reasoning capabilities. Until then, responsible application of LLM reasoning requires transparent limits, continuous validation, and careful alignment with user needs and safety requirements.